In the previous posts, I covered a lot of ground around OSINT. There are, however, still some techniques and ideas that I have not mentioned yet. I saved them for this post because I feel they mostly relate to organizations. That said, don't let this stop you from using them elsewhere; this post simply demonstrates them in the context of organizations. As always, I present you with a limited framework:

- I am interviewing for/joining/doing business with the organization; I want to find some information about it.

- I am doing a security assessment/bug bounty on the organization; I want to find some technical details.

Employee Reviews

An organization is only as good as its employees. Employees also like to write anonymous reviews of their company. These are particularly useful when you are considering joining the company or trying to figure out what salary to ask for. The most popular site for that is Glassdoor Company Reviews. Note that you will be required to log in before viewing all reviews. A similar site for reviews is Indeed.

(Review of Apple Inc. on Glassdoor.com)

Business-related information about a company tends to be specific to its country of registration, so I decided not to focus on it heavily in this post. There are aggregators such as opencorporates.com. For country-specific search providers, I recommend checking the Business Records section of OSINT Framework.

Technical Stack

From a pentester's point of view, knowing the technology stack of the organization is valuable. You want to maximize your efforts, so knowing, for instance, which antivirus or outbound proxy the company is using might help you structure your attack. I do multiple things to find this out.

- Look for job postings. They usually list the skills or experience required for the position, so focus on technical positions. You can find job postings in several ways. One good option is LinkedIn Jobs. There are also job sites such as Indeed or Monster. I recommend using a Google dork to find all possible sites:

  "<ORG_NAME>" intext:career | intext:jobs | intext:job posting

  Often, a company also lists job postings on its own website. The idea behind this technique is simple: organizations tend to stay consistent and deploy the same products company-wide.

- Similar to the previous technique, look for (technical) employees of the organization on LinkedIn (check the previous post). They will most likely keep their certifications and skills up to date there. Beware that the certifications could have been acquired at previous jobs, so I usually use this information to cross-validate the other methods.

- Check stackshare.io. Some (mainly technical) companies share their stack publicly.

- Use search engines. You should not limit yourself to job postings. There might be questions on StackOverflow or similar sites from employees about specific products. This step will require more in-depth OSINT.

- Metadata. Organizations often share documents publicly on their websites. You can leverage the fact that popular business products such as Microsoft Office or Adobe Reader append metadata to files by default. This metadata contains things like the author's name, the date, and most importantly the software type and version. You can target an old version of some software with a client-side exploit. The best part is that since the metadata often contains the author's name, you know who your primary target is. If you are interested in this topic, I highly recommend this post written by Martin Carnogursky.

-

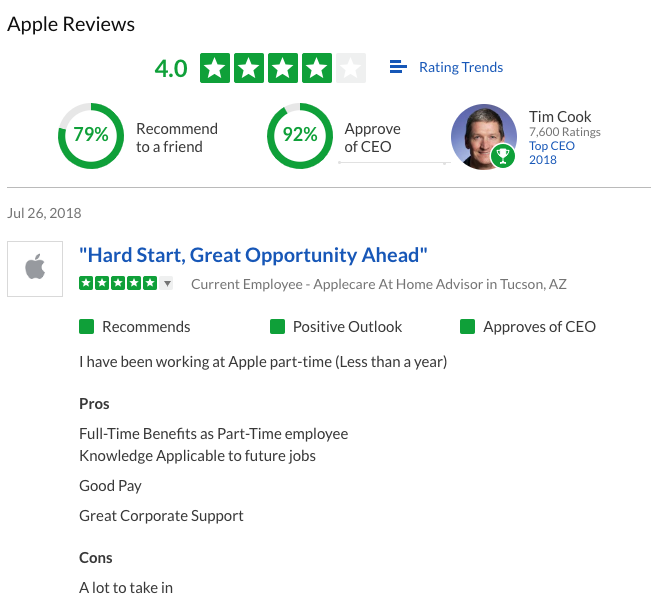

Fingerprint on your own. This part is a little tricky. Mainly, you want to find out what is running inside the network without being in the network (note that external scans will most likely not tell you what web proxy is being deployed). There is, however, one method for that, although I would still label it as experimental. The method is called DNS Cache Snooping.

The basic idea is this: You will check the organization's DNS cache to see, whether there were some previous requests to some specific domain. Why is it useful? Imagine the antivirus. It downloads the new signatures periodically. For instance, some updates from McAfee some from domain download.nai.com. You can use non-recursive DNS request to organization's DNS server to check whether this domain is in its cache or not. However, external and internal DNS servers must be shared (or at least their cache) and still, having a cache hit might be only a false positive. That's why I called it experimental. Let's look at a diagram that might make things more easy for you (or not):

You can execute the non-recursive DNS query using this dig command:

dig @DNS_SERVER -t A DOMAIN_TO_CHECK +norecurse

By external DNS server, I primarily mean the DNS servers serving the organization's website(s), in other words:

dig -t NS MAIN_DOMAIN

Another problem is that you need to know the domains used by the products (a.k.a. snooping signatures). There are projects such as DNSSnoopDogg that collect them. However, they have not been updated for some time.
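If you would rather script the check than run dig by hand, here is a minimal Python sketch of the same non-recursive query using only the standard library. The function names and the tiny `SIGNATURES` table are my own illustration (the only entry is the McAfee domain mentioned above), and the cache-hit test is deliberately naive: it only looks at the answer count, so treat a hit as a hint, not proof.

```python
import socket
import struct

# Snooping signatures: product -> domain it is known to contact.
# Only the McAfee entry comes from this post; extend it yourself.
SIGNATURES = {"McAfee": "download.nai.com"}


def build_query(domain: str, query_id: int = 0x1234, recurse: bool = False) -> bytes:
    """Build a DNS query for an A record. RD (recursion desired) is off by default."""
    flags = 0x0100 if recurse else 0x0000  # 0x0100 is the RD bit in the flags field
    # Header: ID, flags, QDCOUNT=1, ANCOUNT=0, NSCOUNT=0, ARCOUNT=0
    header = struct.pack("!HHHHHH", query_id, flags, 1, 0, 0, 0)
    # QNAME: each label prefixed by its length, terminated by a zero byte
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in domain.rstrip(".").split(".")
    ) + b"\x00"
    question = qname + struct.pack("!HH", 1, 1)  # QTYPE=A, QCLASS=IN
    return header + question


def answer_count(response: bytes) -> int:
    """Return ANCOUNT (number of answer records) from a DNS response header."""
    return struct.unpack("!HHHHHH", response[:12])[3]


def is_cached(dns_server: str, domain: str, timeout: float = 3.0) -> bool:
    """Send a non-recursive query over UDP; any answers suggest a cache hit."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.sendto(build_query(domain), (dns_server, 53))
        response, _ = sock.recvfrom(512)
    return answer_count(response) > 0
```

Calling `is_cached("DNS_SERVER", SIGNATURES["McAfee"])` is roughly equivalent to the dig command with `+norecurse`. A server that is authoritative for the queried domain will answer regardless of its cache, which is one more source of false positives.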

Public Secrets

The last topic of this post, and probably my favorite, is public secrets. It is incredible what organizations share publicly without realizing it. This post has in fact already covered two categories: Metadata and Exposed services.
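To show how little effort the metadata category takes, here is a minimal sketch that pulls the author and software version out of a downloaded Office document. It assumes a modern Office Open XML file (.docx, .xlsx, .pptx), which is a ZIP archive carrying its properties in `docProps/core.xml` and `docProps/app.xml`. The function name is my own, and PDFs or legacy .doc files would need a different parser.

```python
import io
import xml.etree.ElementTree as ET
import zipfile

# XML namespaces used by the Office Open XML property files
NS = {
    "cp": "http://schemas.openxmlformats.org/package/2006/metadata/core-properties",
    "dc": "http://purl.org/dc/elements/1.1/",
    "ep": "http://schemas.openxmlformats.org/officeDocument/2006/extended-properties",
}


def office_metadata(data: bytes) -> dict:
    """Extract author and software details from the raw bytes of an OOXML file."""
    meta = {}
    with zipfile.ZipFile(io.BytesIO(data)) as archive:
        names = set(archive.namelist())
        if "docProps/core.xml" in names:
            core = ET.fromstring(archive.read("docProps/core.xml"))
            meta["author"] = core.findtext("dc:creator", namespaces=NS)
            meta["last_modified_by"] = core.findtext("cp:lastModifiedBy", namespaces=NS)
        if "docProps/app.xml" in names:
            app = ET.fromstring(archive.read("docProps/app.xml"))
            meta["application"] = app.findtext("ep:Application", namespaces=NS)
            meta["app_version"] = app.findtext("ep:AppVersion", namespaces=NS)
    return meta
```

For a file on disk, something like `office_metadata(open("report.docx", "rb").read())` returns a dictionary; fields the document does not carry come back as `None`.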

There are, however, other types of public secrets. Firstly, there are secrets committed to git repositories. This usually happens by accident when developers work with code that has API keys or passwords hardcoded in it. When such code is committed to a git repository, the secret stays in the history even if it is later deleted from the code (unless the history itself is purged). There are two projects which I use for scanning git repositories: gitleaks and truffleHog.
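To illustrate why deleting a secret is not enough, here is a rough sketch that greps the full patch history of a local clone. This is not how gitleaks or truffleHog work internally; the three patterns are my own simplified examples, and the real tools ship far larger rule sets plus entropy checks.

```python
import re
import subprocess

# Simplified, illustrative patterns; real scanners use many more rules.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hardcoded_password": re.compile(r"(?i)password\s*[:=]\s*['\"][^'\"]{4,}['\"]"),
}


def find_secrets(text: str) -> list[tuple[str, str]]:
    """Return (pattern name, matched string) pairs found in the given text."""
    hits = []
    for line in text.splitlines():
        for name, pattern in PATTERNS.items():
            match = pattern.search(line)
            if match:
                hits.append((name, match.group(0)))
    return hits


def scan_history(repo_path: str) -> list[tuple[str, str]]:
    """Scan every patch on every branch, so removed lines are checked too."""
    log = subprocess.run(
        ["git", "-C", repo_path, "log", "-p", "--all"],
        capture_output=True,
        encoding="utf-8",
        errors="replace",
        check=True,
    ).stdout
    return find_secrets(log)
```

Because `git log -p` prints removed lines as well as added ones, a key that was "deleted" three commits ago still shows up in the output of `scan_history`.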

Similarly, paste sites are a gold mine for secret data. Developers tend to share code using these sites, and they tend not to think about the security aspect, often submitting code with secrets included. If you want to dig deep into this, I recommend using PasteHunter. It is a project which periodically checks popular paste sites and runs YARA signatures to check whether the pastes contain interesting strings. Alternatively, you can use ad-hoc methods such as Google dorks: site:pastebin.com ORG_DOMAIN.

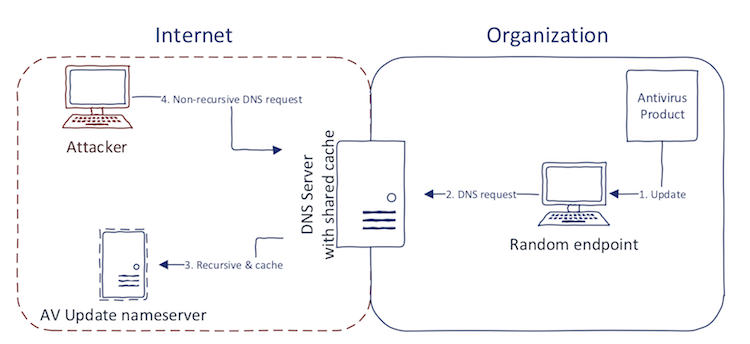
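If you run these dorks for many organizations, it helps to generate them. Below is a small sketch that builds the two dorks used in this post and turns them into Google search URLs. The helper names are mine, and the sketch only builds links; it does not scrape results.

```python
from urllib.parse import urlencode


def jobs_dork(org_name: str) -> str:
    """Dork for job postings mentioning the organization (from the Technical Stack section)."""
    return f'"{org_name}" intext:career | intext:jobs | intext:job posting'


def paste_dork(org_domain: str, site: str = "pastebin.com") -> str:
    """Dork for pastes mentioning the organization's domain on a given paste site."""
    return f"site:{site} {org_domain}"


def google_search_url(query: str) -> str:
    """Turn a dork into a URL you can open in a browser."""
    return "https://www.google.com/search?" + urlencode({"q": query})
```

For example, `google_search_url(paste_dork("example.com"))` produces a link for the pastebin search. Pass a different `site` value to cover other paste sites.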

Lastly, I want to mention public S3 buckets. Recently, there were multiple cases where sensitive information was hosted in public S3 buckets. An S3 bucket can be configured as public, which developers often opt for because it is easier to work with. The problem is that once the bucket name is discovered, all its content can be seen by anybody without authentication. As for tools, I recommend bucket-stream, which is a CLI tool. I also highly recommend a new tool which can be seen as Shodan for S3: buckets.grayhatwarfare.com.
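For a quick manual check, here is a minimal sketch that guesses a few bucket names and classifies the HTTP response. The suffix list is a made-up example, and the sketch assumes the virtual-hosted URL form `https://<bucket>.s3.amazonaws.com/`, where an anonymous request typically returns 200 for a listable bucket, 403 for an existing but private one, and 404 when the bucket does not exist. Only probe names you are authorized to test.

```python
import urllib.error
import urllib.request

# Hypothetical suffixes; real wordlists are much longer.
SUFFIXES = ["", "-backup", "-dev", "-staging", "-assets"]


def candidate_buckets(org_name: str) -> list[str]:
    """Generate guessable bucket names from the organization's name."""
    base = org_name.lower().replace(" ", "-")
    return [base + suffix for suffix in SUFFIXES]


def classify(status: int) -> str:
    """Map the HTTP status of an anonymous bucket request to a verdict."""
    if status == 200:
        return "public (listing allowed)"
    if status == 403:
        return "exists, access denied"
    if status == 404:
        return "no such bucket"
    return f"unexpected status {status}"


def check_bucket(name: str, timeout: float = 5.0) -> str:
    """Request the bucket root anonymously and classify the result."""
    url = f"https://{name}.s3.amazonaws.com/"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return classify(response.status)
    except urllib.error.HTTPError as error:
        return classify(error.code)
```

Looping `check_bucket` over `candidate_buckets("Example Corp")` gives a quick overview. A 403 is still useful, because it confirms that the name is taken.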

Part 4 of OSINT Primer will deal with Certificates. Stay tuned on Twitter to get it first.

Parts in this series:

OSINT Primer: Domains

OSINT Primer: People

OSINT Primer: Organizations

Until next time!